The next frontiers in AI beyond scaling

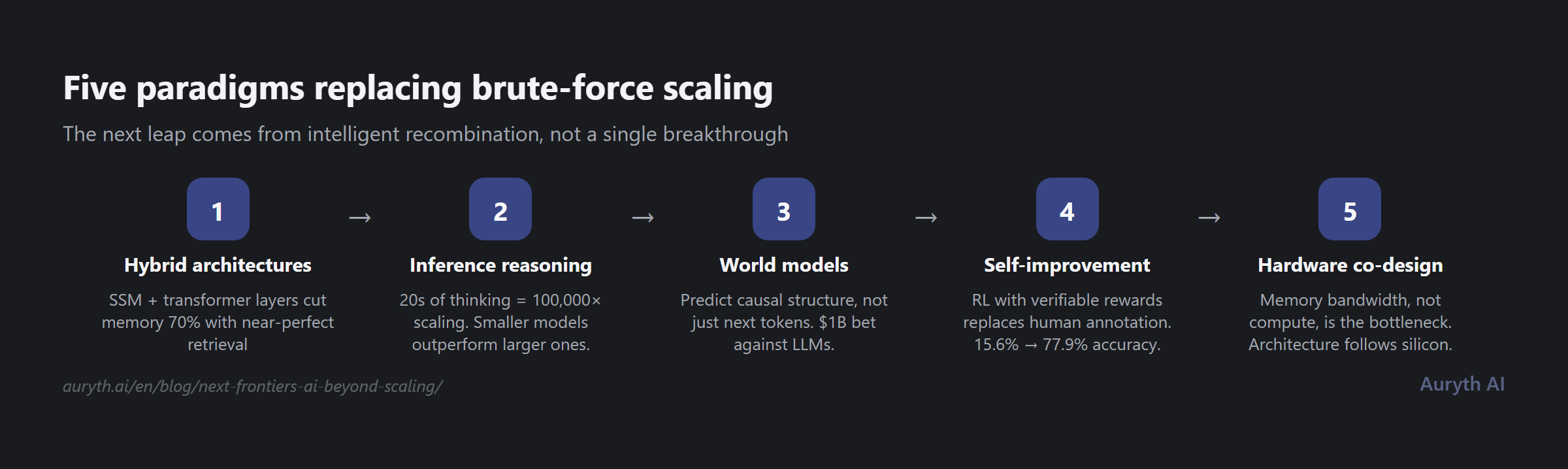

The era of making models bigger is ending. Five paradigms are replacing brute-force scaling: hybrid architectures, inference-time reasoning, world models, self-improvement loops, and hardware co-design. Here is what each means for AI in regulated industries.

By Auryth Team

The architects of AI scaling now say their own paradigm is ending. Ilya Sutskever, whose work on scaling laws shaped the last five years of AI research, told The Decoder that “we’re moving from the age of scaling to the age of research.” Yann LeCun left Meta to bet over a billion dollars that large language models are a dead end. Even Dario Amodei refuses to assume that spending more on compute guarantees progress.

This is not an abstract debate. If you work in a regulated industry — tax, legal, compliance — the next generation of AI tools you rely on will be shaped by whichever paradigm wins. Understanding what’s coming helps you evaluate what’s real and what’s hype.

The short version: five distinct approaches are replacing brute-force scaling, each attacking a different limitation of current systems. None will win alone. The next leap comes from their combination.

Hybrid architectures are quietly replacing pure transformers

The transformer architecture that powers every major language model today has a fundamental problem: self-attention scales quadratically with sequence length. Double the context window and the attention compute quadruples. This worked when models processed a few thousand tokens. It breaks when you need to search across entire legal codebooks.
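To make the quadratic claim concrete, here is a back-of-the-envelope sketch. It counts only the two large matrix products in one attention layer (scores and the weighted sum of values) and ignores projections, softmax, and multi-head bookkeeping. The function names and the default `d_model` of 4096 are illustrative assumptions, not figures from any specific model.

```python
def attention_matmul_flops(seq_len: int, d_model: int) -> int:
    """Approximate FLOPs for the two n-by-n matrix products in one attention layer.

    Computing the score matrix Q @ K^T and then scores @ V each take about
    n^2 * d multiply-adds, i.e. 2 * n^2 * d FLOPs apiece.
    Projections and softmax are deliberately left out of this estimate.
    """
    return 4 * seq_len * seq_len * d_model


def cost_ratio(short_len: int, long_len: int, d_model: int = 4096) -> float:
    """How many times more attention compute the longer context needs.

    d_model=4096 is an illustrative default; it cancels out of the ratio.
    """
    return attention_matmul_flops(long_len, d_model) / attention_matmul_flops(short_len, d_model)


if __name__ == "__main__":
    print(cost_ratio(4_096, 8_192))    # doubling the context
    print(cost_ratio(4_096, 131_072))  # a 32x longer context
```

Under these assumptions, doubling the context multiplies attention compute by 4, and a 32-fold longer context multiplies it by 1,024.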

The fix isn’t replacing transformers — it’s diluting them. Production systems from IBM, NVIDIA, and AI21 now interleave transformer attention layers with state-space model (SSM) layers. AI21’s Jamba uses one attention layer per eight total. IBM Granite 4.0 runs one in ten. NVIDIA’s Nemotron-H drops to roughly 8% attention.
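The interleaving idea can be sketched as a simple layer schedule. This is a minimal illustration of a "one attention layer per N" ratio, not the actual layout of Jamba, Granite, or Nemotron-H. The function name and the choice to place attention in the middle of each group are assumptions made for the example.

```python
def hybrid_layer_schedule(num_layers: int, attention_every: int) -> list[str]:
    """Build a layer-type list with one attention layer per `attention_every` layers.

    The position of the attention layer inside each group (the middle) is an
    arbitrary illustrative choice; real models pick their own placement.
    """
    if num_layers <= 0 or attention_every <= 0:
        raise ValueError("num_layers and attention_every must be positive")
    slot = attention_every // 2
    return [
        "attention" if i % attention_every == slot else "ssm"
        for i in range(num_layers)
    ]


if __name__ == "__main__":
    schedule = hybrid_layer_schedule(num_layers=32, attention_every=8)
    share = schedule.count("attention") / len(schedule)
    print(schedule[:8])
    print(f"attention share: {share:.1%}")
```

With 32 layers and one attention layer per eight, only 4 layers pay the quadratic attention cost; the other 28 are SSM layers.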

The results matter for professional applications: 70% less memory consumption, two to five times higher throughput, and near-perfect document retrieval even with minimal attention layers.

Why not go to zero attention? Because a 2025 ablation study found that removing all attention layers drops retrieval accuracy to literal zero. SSM layers contribute nothing to retrieval on their own. But you need far fewer attention layers than anyone assumed — as few as three suffice for reliable retrieval in a 50-layer model.

For retrieval-augmented generation systems like the one powering Auryth, this is directly relevant. Hybrid architectures mean faster search across larger legal databases, longer effective context windows for cross-referencing provisions, and lower inference costs per query — without sacrificing the precision that authority-weighted retrieval requires.

Inference-time reasoning trades thinking time for model size

The most important scaling law discovered recently isn’t about training. It’s about inference.

Noam Brown at OpenAI put it bluntly: letting a model think for 20 seconds on a poker hand delivered the same performance boost as scaling the model by 100,000 times and training it 100,000 times longer. The foundational paper by Snell et al. demonstrated that optimally allocated test-time compute lets smaller models outperform much larger ones in matched evaluations.

OpenAI’s o-series models use large-scale reinforcement learning to generate internal chains of thought, including backtracking and self-correction. Their o4-mini achieves 99.5% on mathematical competition problems at roughly 30% of the cost of o3.

But there are two hard ceilings. The cost ceiling: complex queries can require over 100 times the compute of a single pass. The wall-clock ceiling is subtler and more consequential — when evaluations take three weeks because the model needs three weeks to think, time itself becomes the bottleneck.

The research shows no single reasoning strategy universally dominates. Self-consistency (majority voting over independently sampled answers) plateaus regardless of additional compute. Process reward models that provide per-step feedback are 8% more accurate and up to five times more compute-efficient than outcome reward models that score only the final answer. Monte Carlo tree search variants dynamically decide whether to explore new candidates or refine existing ones.
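Self-consistency is the simplest of these strategies to write down. The sketch below assumes the candidate answers have already been sampled and normalized to comparable strings; the function name is illustrative.

```python
from collections import Counter


def self_consistency(answers: list[str]) -> tuple[str, float]:
    """Return the majority answer and the share of samples that agree with it."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / len(answers)


if __name__ == "__main__":
    # Four hypothetical samples for the same question.
    print(self_consistency(["42", "42", "41", "42"]))
```

The plateau follows from the mechanics: once the majority answer is stable, extra samples only confirm it. If the model's most frequent answer is wrong, more votes do not fix it.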

For confidence scoring in professional contexts, this has direct implications. A system that can invest more thinking time on ambiguous queries — and report when its confidence is low — is fundamentally more useful than one that processes every question identically. The challenge is making this computationally tractable for real-time professional use.
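One way to picture "more thinking time on ambiguous queries" is an adaptive sampling loop: keep drawing answers until they agree strongly enough or a budget runs out, then flag the result if agreement stayed low. This is a sketch under stated assumptions, not a description of any production system. `sample_answer` is a hypothetical callable standing in for a model call, the thresholds are arbitrary, and the agreement rate is a rough proxy rather than a calibrated confidence score.

```python
from collections import Counter
from typing import Callable


def answer_with_adaptive_budget(
    sample_answer: Callable[[], str],  # hypothetical stand-in for one model call
    min_samples: int = 3,
    max_samples: int = 15,
    agreement_threshold: float = 0.8,  # arbitrary illustrative cutoff
) -> dict:
    """Spend more samples on queries whose answers disagree; flag low agreement."""
    if not 1 <= min_samples <= max_samples:
        raise ValueError("require 1 <= min_samples <= max_samples")
    answers = [sample_answer() for _ in range(min_samples)]
    while True:
        winner, votes = Counter(answers).most_common(1)[0]
        agreement = votes / len(answers)
        # Stop when the answers agree strongly enough or the budget is spent.
        if agreement >= agreement_threshold or len(answers) >= max_samples:
            break
        answers.append(sample_answer())
    return {
        "answer": winner,
        "agreement": agreement,
        "samples_used": len(answers),
        "low_confidence": agreement < agreement_threshold,
    }


if __name__ == "__main__":
    # An "easy" query: every sample agrees, so the loop stops at the minimum.
    print(answer_with_adaptive_budget(lambda: "Article 12 applies"))
```

Easy queries exit after the minimum number of samples, while contested ones consume the full budget and come back flagged. That is the cost trade-off described above.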

World models and neurosymbolic systems target reasoning itself

The deepest divide in AI research today pits language model scaling against fundamentally different approaches to understanding.

LeCun’s new venture, AMI Labs, raised $1.03 billion at a $3.5 billion valuation — with NVIDIA, Bezos, and Samsung among investors — to build systems based on Joint Embedding Predictive Architecture (JEPA). Instead of predicting the next token, JEPA predicts abstract representations of reality. The goal is causal understanding rather than statistical pattern matching.

LeCun’s position is unambiguous: “If you are interested in human-level AI, don’t work on LLMs.”

The pragmatic counterargument comes from systems that pair neural networks with formal verification. DeepMind’s AlphaProof, published in Nature, combines a language model with reinforcement learning to prove mathematical statements in the Lean formal language. At the International Mathematical Olympiad 2024, its solutions included the hardest problem, which only five human contestants solved, and every proof was verified by the theorem prover. A proof that passes the checker cannot contain a hallucinated step.

This matters for legal AI because it demonstrates a viable architecture for provably correct reasoning. A system that can formally verify its own conclusions against a legal knowledge base — not just retrieve and summarize, but prove logical consistency — would represent a qualitative leap over current RAG approaches.

François Chollet’s ARC-AGI benchmark continues to expose the gap. The best commercial model scores 37.6% on tasks that require genuine reasoning rather than pattern matching. Chollet’s central insight: “Current frontier AI reasoning performance remains fundamentally constrained to knowledge coverage.” Human reasoning generalizes beyond training data in ways current systems do not.

Self-improvement works but has hard limits

DeepSeek-R1 demonstrated something striking: applying reinforcement learning directly to a base model with only correctness-based rewards — no supervised fine-tuning, no human demonstrations — produced a model that spontaneously developed self-verification and reflection. Pass rates on mathematical competition problems jumped from 15.6% to 77.9% through pure reinforcement learning.

But the same paper revealed a critical limit: reinforcement learning on smaller models “simply cannot compete with distillation from a more capable teacher.” A distilled 32-billion-parameter model outperformed a model of the same size trained with reinforcement learning from scratch.

Pure self-bootstrapping has a ceiling. You need a strong teacher first. This challenges the most optimistic visions of recursive self-improvement while validating a more nuanced path: large frontier models generate verified reasoning traces, which are distilled into smaller models, which serve as the foundation for the next round of improvement.

The broader post-training revolution has shifted from human preferences to verifiable rewards. Math correctness, code execution, formal proof systems — these replace expensive human annotation with automated verification. This is the same principle behind Auryth’s confidence scoring: verifiability over model self-assessment.
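A verifiable reward can be as simple as a function that checks the final answer against a known reference and returns 1 or 0, with no human rater in the loop. The sketch below is illustrative only. The `Answer:` marker convention and both function names are assumptions for the example, not the reward used by DeepSeek or any other lab.

```python
from typing import Optional


def extract_final_answer(model_output: str) -> Optional[str]:
    """Pull out the text after the last 'Answer:' marker.

    The marker is a hypothetical output convention chosen for this sketch.
    """
    marker = "Answer:"
    idx = model_output.rfind(marker)
    if idx == -1:
        return None
    return model_output[idx + len(marker):].strip()


def correctness_reward(model_output: str, reference_answer: str) -> float:
    """Return 1.0 when the extracted final answer matches the reference, else 0.0."""
    final = extract_final_answer(model_output)
    if final is None:
        return 0.0  # unparseable output earns no reward
    return 1.0 if final == reference_answer.strip() else 0.0


if __name__ == "__main__":
    print(correctness_reward("3 * 4 = 12. Answer: 12", "12"))
    print(correctness_reward("I think it is 13. Answer: 13", "12"))
```

The reasoning text before the marker is never graded here; only the checkable result is. Code execution and formal proof checking follow the same pattern with a stronger verifier in place of string equality.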

Model collapse — degradation when models train on their own outputs — is real but manageable. The critical finding: if you keep original real data in the training mix, collapse is avoided. With over 74% of newly created web pages now containing AI-generated text, data curation has become as important as the training algorithm itself.

Hardware is the hidden hand shaping every architecture

Transformers won because of GPUs, not despite them. Self-attention is dense matrix multiplication that achieves 80–90% hardware utilization. SSMs, with their recurrent operations, initially peaked at 10–15% utilization — making them slower in practice despite better theoretical scaling.

The memory bandwidth wall has become the dominant constraint. Token-by-token language model inference is typically memory-bandwidth-bound, not compute-bound: generating each token means streaming the model’s weights from memory. This is why Cerebras, with its massive on-chip memory bandwidth, delivers roughly twice the speed of NVIDIA’s latest for large models.
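A rough upper bound shows why bandwidth dominates. If a dense model must read all of its weights once per generated token, single-stream decoding speed cannot exceed memory bandwidth divided by the size of the weights. The sketch below ignores batching, KV-cache traffic, and caching effects, and the numbers in the usage example are round illustrative values, not the specifications of any real chip or model.

```python
def max_decode_tokens_per_second(
    num_params: float,
    bytes_per_param: float,
    bandwidth_bytes_per_s: float,
) -> float:
    """Bandwidth-only ceiling on single-stream decoding speed for a dense model.

    Assumes every weight is read from memory once per generated token and
    ignores KV-cache reads, batching, and compute time.
    """
    if min(num_params, bytes_per_param, bandwidth_bytes_per_s) <= 0:
        raise ValueError("all inputs must be positive")
    weight_bytes = num_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes


if __name__ == "__main__":
    # Illustrative round numbers: 70B parameters, 2 bytes each, 2 TB/s of bandwidth.
    print(max_decode_tokens_per_second(70e9, 2, 2e12))
```

With those illustrative inputs the ceiling is about 14 tokens per second per stream, however many FLOPs the chip offers. Halving the bytes per parameter or doubling the bandwidth doubles it.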

Energy consumption is emerging as an architectural driver. US data centers consumed 183 terawatt-hours in 2024. AI energy consumption alone may reach 134 terawatt-hours annually by 2026, equivalent to Sweden’s total consumption. This thermodynamic pressure favors techniques that cut the energy spent per query: mixture-of-experts models that activate only a fraction of their parameters, aggressive quantization, and potentially neuromorphic approaches.
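The mixture-of-experts idea can be illustrated with top-k routing: a router scores every expert for a given token, and only the k best-scoring experts run. This is a minimal sketch with made-up scores. Real routers are learned layers that also weight and combine the expert outputs, which is omitted here, and the function names are illustrative.

```python
def top_k_experts(router_scores: list[float], k: int) -> list[int]:
    """Return the indices of the k highest-scoring experts, in ascending index order."""
    if not 0 < k <= len(router_scores):
        raise ValueError("k must be between 1 and the number of experts")
    ranked = sorted(
        range(len(router_scores)),
        key=lambda i: router_scores[i],
        reverse=True,
    )
    return sorted(ranked[:k])


def active_expert_fraction(num_experts: int, k: int) -> float:
    """Share of expert parameters that run for a single token."""
    return k / num_experts


if __name__ == "__main__":
    scores = [0.10, 0.70, 0.05, 0.15]  # made-up router scores for one token
    print(top_k_experts(scores, k=2))
    print(active_expert_fraction(num_experts=8, k=2))
```

With 2 of 8 experts active, three quarters of the expert parameters stay idle for that token. That idle share is where the energy saving comes from.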

For professional AI applications, the hardware trajectory has a concrete implication: inference costs will determine which AI tools are commercially viable at scale. The architectures that deliver accurate results with the least compute per query — exactly the efficiency that hybrid search and targeted retrieval provide — will win the deployment race.

What this means for AI in regulated industries

Five paradigms are converging. Hybrid architectures deliver speed and scale. Inference-time reasoning delivers depth on hard problems. World models promise causal understanding. Self-improvement loops reduce dependence on human annotation. Hardware constraints force efficiency.

For professionals who depend on AI for research — in tax, legal, compliance — three things follow.

First, accuracy will improve not from bigger models but from better reasoning architectures. Systems that can invest more compute on harder queries, verify their own conclusions, and report genuine uncertainty will replace systems that process every question the same way.

Second, retrieval-based systems become more important, not less. As language models hit data walls and scaling plateaus, the differentiator is no longer training data alone but the ability to search, rank, and cite from authoritative sources, which is the core of what Auryth builds.

Third, the cost of AI inference will shape which tools survive commercially. Architectures that deliver professional-grade accuracy at sustainable compute costs will outcompete both cheap-but-unreliable chatbots and expensive-but-brilliant frontier models. The sweet spot is retrieval-augmented systems with targeted reasoning — precise where it matters, efficient everywhere else.

The era of “just make it bigger” is over. The era of making it smarter has begun.